It doesn't matter if you tell them or not. They have the film, so it's not a secret.

If I'm reading or watching BU's statements on 5 Out as the opposing coach, I'm playing D much like Oakland.

No driving lanes and tough 3's. Make the Illini beat you in the mid-range, which BU has advertised he does not want. I guess I have trouble with telling your opponent that we want to be 2 dimensional on offense.

Thankfully, Tomi feasted in the mid-range on a night when some open 3's did not fall.

Illinois 66, Oakland 54 Postgame

- Status

- Not open for further replies.

Very true. Good luck convincing this board or the modern fan of that fact, though.

I understand analytics. My degrees revolve around analytics. My living revolves around analytics. I won national championships with analytics in another sport. I coached national champions with analytics.

I'm not saying we need radical change, maybe just a tweak. Game theory suggests having 3 levels of scoring is better than 2, because both teams have access to the same analytics and adjust strategies accordingly. In that situation, more options is better. I don't believe that's a controversial statement.

....Also relevant because some of our passing last night looked similar.

was definitely shaky

MDchicago

- Lake Norman NC

Ok, so what's different with NET? They've never released their actual formula (don't get me started), which in my opinion is fairly ridiculous non-transparency for the NCAA-approved metric that postseason selection is supposed to be based on. From empirical evidence, though, one thing seems very clear: those scoring limiters are either not included or severely weakened, meaning that margin of victory has a much, much higher role in ranking than it used to.

Hence, in NET, clownstomping the worst team in college basketball by 60pts is worth way more than beating a 250th ranked team by 20. So while strategy used to be avoid 300+ ranked teams like the plague, NET actually encourages playing them and running up the score and then similarly, playing top 25 teams in the rest of your non-con to bolster opponent efficiency numbers while not having to worry about a non Q1 loss in the non-con.

TLDR: In summary, Brad was advised well in the NET era of how to schedule, and it is a departure from what we knew as fact even a few years ago. Also, NET is complete and utter trash, and the reason they don't release their formulas is so they can still adjust them behind the scenes and such that the stats community doesn't laugh it out of existence

Not a defender in any respect of the NCAA or the NET, but I do recall reading (e.g., the Andy Katz article linked below) that NET ratings cap the impact of scoring margin at 10 points per game. Not sure if that has changed, but ChatGPT still mentions a 10 point scoring margin cap in its description of the NET.

Regardless, for scheduling purposes, I think that programs that view themselves as good enough to win multiple games in the tournament are better served to schedule competition that will challenge them and make them better by providing meaningful opportunities for growth and experience, rather than building a non-con schedule around trying to game the NET by playing cupcakes. Would feel differently if I was a coach trying to save my job or thought my team was marginal/on the bubble.

Katz link

<<<The NCAA Evaluation Tool, or NET, will be the new barometer for the committee, and it will include game results, strength of schedule, game location, scoring margin (capping at 10 points per game), and net offensive and defensive efficiency.

The committee did consider using the game date, an uncapped scoring margin, distance traveled and days of rest before a game but decided against using these in the equation.

“This will have the components of all the metrics,” said Gonzaga coach Mark Few, one of the coaches from the National Association of Basketball Coaches who was a consultant on the project. “This will prevent the outliers,” Few said. “There were teams and leagues that were able to trick the RPI, either intentionally or unintentionally. We have all the technology and analytics, and it was silly not to use it...>>>

Yes but offense and defense efficiency are uncapped.

I thought the same thing. It was obvious that we don’t practice any of the shots that they were forcing us to take. Lots of bricks. We just looked uncomfortable all night. Good to see us find a way on a tough night though.

ptgrd23

- orange county CA

The Illini defense is designed to prevent 3's and dunks and force opponents to shoot mid-range jumpers. Oakland did this to us with their zone.

We did not punish them on the boards. We had too many turnovers trying difficult interior passes and being sloppy with the ball on the perimeter. Somebody besides Tomas has to score inside. Last year Quincy would drive from the corner, and Marcus and Dain would post up. Maybe we missed Ty.

We also did not fast break. Last year TSJ would push the ball up the court at every opportunity. Brad should have been all over the team for not throwing the outlet pass and getting easy fast break buckets.

Great job by Brad to schedule Oakland. We are lucky that Jack Gohlke graduated or we may have been looking at a home loss.

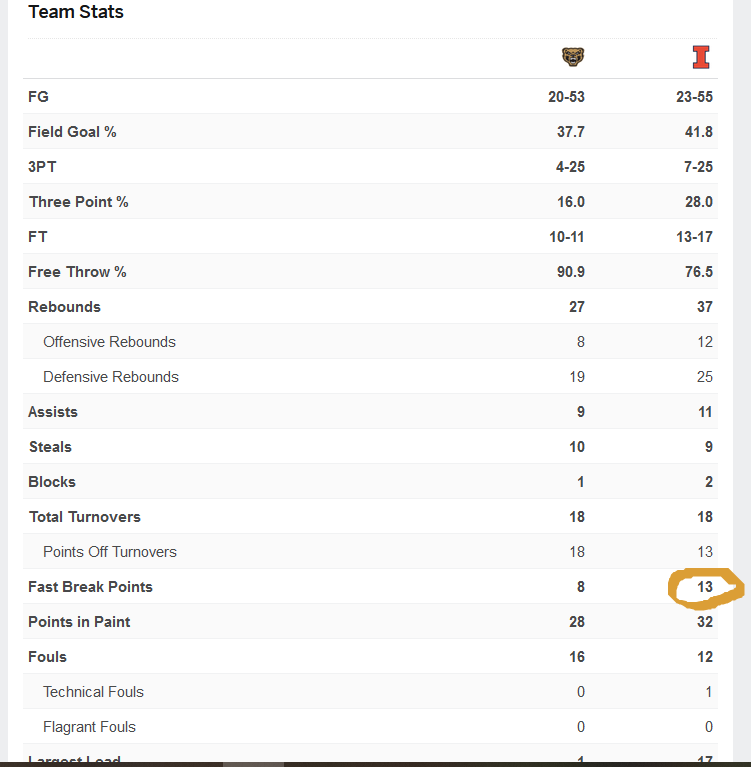

Thing that sticks out to me in that game graphic is 32 points in the paint, 7 threes (and 13 free throws). I think that's two games in a row with zero mid-range points scored?

If we land KJ first would Kylan even have been a take?

Early on NET did include margin of victory as an additional input which they did state they capped, however they deemed it redundant in 2020 and removed it. And while margin of victory is indeed already included in efficiency metrics (it's scaled by number of possessions), this change made NET more volatile and made blowouts worth even more than they already were.

And the Mark Few comment is indeed correct: there were ways to take advantage of the shortfalls of RPI/SOS, and there are ways to take advantage of the shortfalls of efficiency stats, and smart teams took advantage. I detailed those shortfalls in my post. That said, NET has its own shortfalls, and teams and coaches are currently taking advantage of them. Now whether they benefit your team outside of seeding purposes is another question...

So, the long and short of it: NET no longer uses margin of victory as a separate input. Raw efficiency is still included, though, which is just your margin of victory scaled by number of possessions, and it's then compared to your expected efficiency margin against said team. The better actual is vs expected, the more you rise; the worse, the more you fall.
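A rough sketch of the actual-vs-expected idea described above. This is not the NET formula, which is not public. The function names, the 60-possession count, and the expected margin of +25 are made-up assumptions for illustration; only the 66-54 final score comes from this thread.

```python
def efficiency_margin(points_for: float, points_against: float, possessions: float) -> float:
    """Scoring margin scaled to points per 100 possessions."""
    if possessions <= 0:
        raise ValueError("possessions must be positive")
    return 100.0 * (points_for - points_against) / possessions


def versus_expected(actual_margin: float, expected_margin: float) -> float:
    """Positive means the team beat expectation, negative means it fell short."""
    return actual_margin - expected_margin


# 66-54 is the final score from the thread title; 60 possessions is a hypothetical count.
actual = efficiency_margin(66, 54, 60)
# Hypothetical expectation of +25 per 100 possessions against this opponent.
delta = versus_expected(actual, 25.0)
print(f"actual margin per 100: {actual:.1f}, vs expected: {delta:+.1f}")
```

Under these assumed numbers, a 12-point win would still count as falling short of expectation, which is the kind of result the post says makes a team fall.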

Why wouldn't the Illini want the second best guard on the team?

Of course. Would Boswell have come is another question.

lstewart53x3

- Scottsdale, Arizona

From everything I’ve read, I agree with every word of this.

So one important thing to note here is that going to NET has created a significant shift in what is statistically optimal when it comes to scheduling. While I unfortunately don't have inner knowledge of the NET iterative formulas, just based off of empirical data from the past few years, it appears Brad has the correct approach, at least statistically speaking. So what is my reasoning for this? Well, I'll try to be as brief as I can.

Years ago, when SOS and RPI were the primary tools of the selection committee, the really bad noncon teams on your schedule were basically millstones around your neck (this makes sense, as almost all teams you play in conference are above .500 teams whose schedules are mostly against .500 teams). Because each game carried equal weight, the worst teams you played served as outliers that absolutely tanked your SOS and RPI, such that playing 300+ ranked teams was a no win situation.

On a somewhat similar note, when it came to efficiency-based iterative systems like Kenpom (the one I am more intimately familiar with), Ken had to make a decision on how to deal with blowout games and runaway scores. One choice was to use unaltered efficiency stats (think margin of victory for the purpose of this explanation, as it's a bit easier to wrap one's mind around); however, this would result in blowouts being outliers for efficiency, and as such those games would carry higher relative weight than other games on the schedule. The other was to limit and damp efficiency for high margin of victory games. He ultimately decided to damp, so that winning by more than about 30pts starts having extreme diminishing returns, where say winning by 70 will have almost the exact same efficiency as winning by 40. Why? Because after a team is up by over 30, score effects start taking place: coaches empty benches, play style changes, etc. As such, playing 300+ ranked teams served basically no net benefit: since you were already expected to beat them by 30pts and you were capped at beating them by about 30pts, playing them couldn't improve your efficiency ratings, but they certainly could nuke them if you only won by say 10-15. In fact this was a known "issue", and it's why teams tried doing whatever they could to not schedule these types of games, as scheduling a team ranked in the 225-275 range has, probability wise, just about the same likelihood of a blowout without the negative effects.
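To make the "diminishing returns past about 30" idea concrete, here is a toy damping curve. It is purely an illustration and not Kenpom's actual method; the function name, the 30-point knee, and the 5-point scale are assumptions picked so that a 70-point win and a 40-point win come out nearly the same.

```python
import math


def damped_margin(margin: float, knee: float = 30.0, scale: float = 5.0) -> float:
    """Leave margins up to `knee` untouched, then compress anything beyond it.

    Beyond the knee, the extra margin approaches at most `scale` additional
    points, so huge blowouts add almost nothing over merely large ones.
    """
    sign = 1.0 if margin >= 0 else -1.0
    size = abs(margin)
    if size <= knee:
        return margin
    excess = size - knee
    return sign * (knee + scale * (1.0 - math.exp(-excess / scale)))


for raw in (15, 30, 40, 70):
    print(f"raw margin {raw:>2} -> damped {damped_margin(raw):.2f}")
```

With a curve like this, a 15-point win over a 300+ team still counts at full value (and looks bad against a 30-point expectation), while running the margin from 40 to 70 gains less than a point.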

Ok, so what's different with NET? They've never released their actual formula (don't get me started), which in my opinion is fairly ridiculous non-transparency for the NCAA-approved metric that postseason selection is supposed to be based on. From empirical evidence, though, one thing seems very clear: those scoring limiters are either not included or severely weakened, meaning that margin of victory has a much, much higher role in ranking than it used to.

Hence, in NET, clownstomping the worst team in college basketball by 60pts is worth way more than beating a 250th ranked team by 20. So while strategy used to be avoid 300+ ranked teams like the plague, NET actually encourages playing them and running up the score and then similarly, playing top 25 teams in the rest of your non-con to bolster opponent efficiency numbers while not having to worry about a non Q1 loss in the non-con.

TLDR: In summary, Brad was advised well in the NET era of how to schedule, and it is a departure from what we knew as fact even a few years ago. Also, NET is complete and utter trash, and the reason they don't release their formulas is so they can still adjust them behind the scenes and such that the stats community doesn't laugh it out of existence

What remains unclear to me is how much your own NET rating matters vs NET simply being a tool to measure who you’ve beaten and lost to.

I personally think Brad should tell everyone in every press conference that we PREFER ONLY mid range jumpers! Then we’d get all the open layups and 3s we want!

So on 2...sounds like you are missing Terrence Shannon (elite at rushing ball...)

A couple things I noticed:

1) There needed to be more drives to the basket. And, when they did drive they tended to stop short favoring those difficult floaters instead of taking the ball all the way to the basket where they would likely have drawn a foul.

2) They need to push the ball up the court more aggressively especially after rebounds.

In general, your points make sense. But when you put them in the context of last year’s game against Oakland, some not so much. Last year we outrebounded Oakland 36 to 32; this year 37 to 27. This year, as you point out, we had 13 fast break points. Last year, with TSJ pushing “the ball up the court every opportunity,” we had 6. Bottom line, we beat them by 11 last year with Gohlke (only had 6 points in 37 minutes) and by 12 this year. Some opponents’ playing styles impact a game more than others.

I think that guy could be Will Riley. He doesn't have the body yet, but he has the length and control to get downhill. Sadly we probably won't see year 2 of him when he could build that athleticism and explosiveness he would need.

Not many teams play zone or even practice it.

Technically you’re right, but the mid range level of scoring becomes obsolete because of the way offenses are designed now and how they run action to get you open 3’s and shots at the rim, which have way higher ROI. Open 3s > open 15 footers.

Illini defense designed to prevent 3's and dunks. Force opponents to shoot mid range jumpers. Oakland did this to us with their zone.

Only counterpoint I'd make is to the first sentence/statement.

We scored all of our points either in the paint, from the free throw line or from the three point line. I don't recall even one mid-range jump shot from an Illinois player in this game.

I don’t have any of your credentials, but you seem to be saying that the addition of a lower value to two higher values raises the mean…

He's not quite saying that, haha, but this is the actual argument he's making:

Me:

Sometimes adding a 3rd option, while a worse individual option in isolation, makes the first 2 options more successful simply through its introduction, so that it's actually better overall.

Imagine Illinois only shoots layups or threes, and they shoot them at the following clips:

Layups (40% of shots): 57% shooting

Threes (60% of shots): 36% shooting

So overall, you expect 2pts*.57*.4 + 3pts*.36*.6 = 1.10pts per shot on average.

Now let's introduce those inefficient midrange shots at say a 48% shooting percentage. You seldom do it, only 10% of the time, but in doing so your opponents are forced to defend it, and as such your 3pt and layup shooters are getting more space and better looks, so now you're hitting layups at 61% instead of 57% and threes at 40% instead of 36%. Your shooting now looks like this:

Layups (37% of shots): 61%

Midrange (10% of shots): 48%

Threes (53% of shots): 40%

So now you'd expect 2pts*.61*.37+2pts*.48*.1+3pts*.4*.53= 1.18pts per shot on average

Again, these are fake numbers, but sometimes adding something that by itself is less efficient actually increases overall efficiency by making your other parts more efficient than they previously were.
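For anyone who wants to check the arithmetic or plug in their own guesses, here is a small sketch. The function name and data layout are just assumptions for illustration; the shot shares and percentages are the same fake numbers from the post.

```python
def points_per_shot(shot_mix):
    """Expected points per shot for a list of (points, share_of_shots, make_pct)."""
    total_share = sum(share for _, share, _ in shot_mix)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("shot shares must add up to 1.0")
    return sum(points * share * pct for points, share, pct in shot_mix)


# Two-level offense: layups and threes only.
two_level = [(2, 0.40, 0.57), (3, 0.60, 0.36)]

# Three-level offense: a few midrange shots, with better looks elsewhere.
three_level = [(2, 0.37, 0.61), (2, 0.10, 0.48), (3, 0.53, 0.40)]

print(f"two-level:   {points_per_shot(two_level):.2f} pts per shot")
print(f"three-level: {points_per_shot(three_level):.2f} pts per shot")
```

The whole argument rests on the assumed bump in layup and three-point percentages; if you set those back to 57% and 36% in the three-level mix, the midrange shots drag the average down instead.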

If I could give a real world example of this, it'd be something like spending time writing and following detailed work instructions for an assembly installation. Writing them is inefficient because it costs time; however, it allows your workers to complete the overall job faster, with fewer mistakes and less rework, such that it more than makes up for the time spent creating the instructions. Hope that explains things a bit better.

We should also take more half court shots to create even more space for closer in threes and layups.
